As AI systems permeate critical domains from healthcare and finance to hiring and public services, automation alone is not enough. Effective human steering (oversight, intervention, accountability, and domain governance) is now a core design requirement across leading standards and regulations. This article explains why human-in-the-loop approaches matter, what the major frameworks require, and how organizations can implement practical guardrails supported by data, case evidence, and policy references.

Beyond Automation: Why Human Steering Is Essential to Responsible AI

Aziro Marketing

27 Mar 2026

1) Why “Human Steering” Matters

Modern AI can optimize and accelerate work, but it also introduces novel risks: opaque reasoning, biased outcomes, overconfident error (“hallucination”), and automation bias among users. Evidence from real workplaces shows AI can lift productivity, especially for less-experienced staff, yet the same tools can propagate mistakes when their outputs go unverified. For example, field studies of generative AI assistants with 5,000+ support agents found average productivity gains of 14–15%, with the largest gains among lower-skilled workers. Because the workers who benefit most are often the least equipped to catch a confident but wrong answer, supervision, training, and escalation paths are needed when quality stakes are high.

At the same time, influential research highlights structural risks: large language models can amplify training-data biases, reduce information diversity, and impose high environmental and social costs if left unchecked, strengthening the case for human governance and careful data curation.

2) What Leading Frameworks Say (and require)

- EU AI Act (Article 14 – Human Oversight). For “high-risk” AI, providers and deployers must enable effective human oversight, including the ability to monitor, interpret, and override decisions; the extent of oversight must be proportional to risk and context. Remote biometric identification systems require verification by at least two trained individuals. The Act's obligations phase in over time, and Article 14 is central to high‑risk deployments.

- NIST AI Risk Management Framework (AI RMF 1.0 + 2024 GenAI Profile). A voluntary U.S. framework organized around four functions (Govern, Map, Measure, Manage) that span the AI lifecycle. It emphasizes human decision authority, documentation, and continuous monitoring, and has been extended with a Generative AI Profile to address content risks and information integrity.

- OECD AI Principles (updated 2024). The first intergovernmental AI standard reinforces human‑centered values, transparency, robustness, and accountability, and explicitly strengthens provisions on information integrity and the ability to override or decommission systems that exhibit harmful behavior.

- GDPR Article 22 (EU). Individuals have the right not to be subject to a decision based solely on automated processing that produces legal or similarly significant effects, except under tightly controlled conditions with meaningful human involvement and rights to contest. Oversight must be substantive, not “rubber‑stamping.”

- Health sector guidance (WHO/AMA/CRS). Global health bodies stress human oversight, transparency, and validation due to safety, privacy, and bias concerns, particularly with large multimodal generative models in care pathways.

Bottom line: The regulatory direction is clear. Human steering is not optional for high‑stakes AI; it is table stakes.

3) Evidence From Failures (and what went wrong)

Historical cases show how weak oversight, poor documentation, or misplaced trust can cause harm:

- Risk scoring in justice systems (COMPAS). Investigations uncovered racial disparities in false positive rates for recidivism predictions, illustrating the dangers of opaque, high‑impact automation without transparent review or appeal.

- Healthcare decision support (Watson for Oncology). Reports of inaccurate recommendations and data issues led to discontinuation, highlighting the need for rigorous validation, domain-expert review, and real‑world evidence before scale.

- Automation bias in customer‑facing tools. Misleading chatbot outputs have triggered legal exposure and reputational harm, reaffirming that organizations are accountable for their AI agents’ statements and must implement oversight and escalation.

These incidents echo a common theme: lack of meaningful human control converts small modeling errors into large‑scale harms.

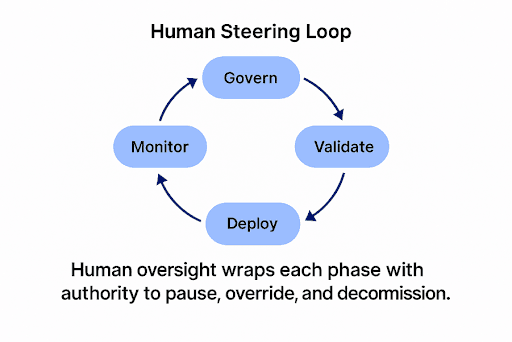

4) Key Components of Effective Human Steering

- Role design and authority. Assign trained overseers with the mandate to question, pause, or override outputs; for certain use cases, require two‑person verification (as in the EU AI Act for remote biometric identification).

- AI literacy and training. Ensure overseers understand model scope, limits, and failure modes (e.g., hallucination and sycophancy, where models mirror user beliefs rather than the truth).

- Procedural safeguards. Implement pre‑deployment testing, differential performance analysis across subgroups, and post‑deployment monitoring with clear triggers for human intervention (NIST AI RMF: Govern/Map/Measure/Manage).

- Documentation and interpretability. Provide clear instructions, model cards, data provenance, and rationale aids so humans can correctly interpret outputs and avoid over‑reliance (EU AI Act Article 14 obligations).

- Redress and appeal. Establish human review channels through which affected individuals can contest automated decisions, in line with GDPR Article 22.

- Data governance for the synthetic era. As synthetic content permeates the web, research shows that replacing real data with synthetic data across successive model generations risks model collapse, while accumulating synthetic data alongside real data and keeping a floor of real data can mitigate degradation. Human curation and dataset hygiene are crucial.
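The two‑person verification mentioned under role design can be sketched as a small gate that only opens once two distinct, trained reviewers have confirmed a result. This is a minimal illustration, not a compliance implementation: the class name `VerificationGate`, its fields, and the reviewer IDs are all hypothetical.

```python
from dataclasses import dataclass, field
from typing import FrozenSet, Set


@dataclass
class VerificationGate:
    """Hypothetical gate: blocks action until enough distinct trained reviewers confirm."""
    trained_reviewers: FrozenSet[str]   # IDs of people authorized to verify (assumed roster)
    required: int = 2                   # number of distinct confirmations needed
    confirmations: Set[str] = field(default_factory=set, init=False)

    def confirm(self, reviewer_id: str) -> None:
        # Only reviewers on the trained roster may confirm.
        if reviewer_id not in self.trained_reviewers:
            raise PermissionError(f"{reviewer_id} is not a trained reviewer")
        # A set ignores repeat confirmations from the same person.
        self.confirmations.add(reviewer_id)

    def is_verified(self) -> bool:
        return len(self.confirmations) >= self.required


gate = VerificationGate(trained_reviewers=frozenset({"ana", "ben"}))
gate.confirm("ana")
gate.confirm("ana")            # same reviewer twice still counts once
first_check = gate.is_verified()
gate.confirm("ben")
second_check = gate.is_verified()
```

Storing confirmations in a set means one reviewer clicking twice cannot satisfy the requirement, which is the property the two‑person rule is meant to guarantee.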

5) Implementation Roadmap (practical steps)

A. Risk-tier your use cases. Classify systems by impact (e.g., safety-critical, rights-impacting, financial) and align oversight intensity accordingly (EU risk-based approach; NIST RMF profiling).

B. Design for “meaningful human control.”

- Choose appropriate modes: human‑in‑the‑loop (pre‑decision), on‑the‑loop (real‑time override), and human review (post‑decision appeals).

- Bake in pause/override controls, confidence cues, and uncertainty indicators to aid judgment.
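Steps A and B can be combined in a small routing sketch: each risk tier gets a default oversight mode, and low model confidence escalates any case to pre‑decision human approval. The tier names follow the examples above, but the policy table, the `route` function, and the 0.9 threshold are illustrative assumptions; a real mapping would come from legal and risk review.

```python
from dataclasses import dataclass
from enum import Enum


class RiskTier(Enum):
    SAFETY_CRITICAL = "safety_critical"
    RIGHTS_IMPACTING = "rights_impacting"
    FINANCIAL = "financial"
    LOW = "low"


class OversightMode(Enum):
    HUMAN_IN_THE_LOOP = "human_in_the_loop"        # human approves before the decision
    HUMAN_ON_THE_LOOP = "human_on_the_loop"        # human monitors and can override
    POST_DECISION_REVIEW = "post_decision_review"  # human handles appeals afterwards


# Hypothetical policy table, not taken from any regulation.
DEFAULT_MODE = {
    RiskTier.SAFETY_CRITICAL: OversightMode.HUMAN_IN_THE_LOOP,
    RiskTier.RIGHTS_IMPACTING: OversightMode.HUMAN_IN_THE_LOOP,
    RiskTier.FINANCIAL: OversightMode.HUMAN_ON_THE_LOOP,
    RiskTier.LOW: OversightMode.POST_DECISION_REVIEW,
}


@dataclass(frozen=True)
class Routing:
    mode: OversightMode
    requires_human_approval: bool


def route(tier: RiskTier, confidence: float, min_confidence: float = 0.9) -> Routing:
    """Pick an oversight mode; escalate to pre-decision review when confidence is low."""
    if not 0.0 <= confidence <= 1.0:
        raise ValueError("confidence must be between 0 and 1")
    mode = DEFAULT_MODE[tier]
    if confidence < min_confidence:
        mode = OversightMode.HUMAN_IN_THE_LOOP
    return Routing(mode, mode is OversightMode.HUMAN_IN_THE_LOOP)


confident_low_risk = route(RiskTier.LOW, confidence=0.95)
uncertain_low_risk = route(RiskTier.LOW, confidence=0.50)
```

In this sketch a confident low‑risk case goes to post‑decision review, while the same case with low confidence is held for human approval. Model confidence scores are often poorly calibrated, so the threshold should be treated as something to validate rather than a fixed constant.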

C. Build an oversight competency.

- Train overseers on domain and model limits; simulate failure drills; track intervention metrics (e.g., override rate, appeal outcomes).
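The intervention metrics named in step C can be computed from a simple review log. The sketch below assumes a hypothetical `ReviewRecord` shape; the field names are illustrative and would need to match whatever your review tooling actually records.

```python
from dataclasses import dataclass
from typing import Iterable, Optional


@dataclass(frozen=True)
class ReviewRecord:
    """One human review of an AI output (hypothetical log schema)."""
    overridden: bool                      # reviewer changed or rejected the AI output
    appealed: bool = False                # affected person contested the decision
    appeal_upheld: Optional[bool] = None  # None while the appeal is unresolved


def override_rate(records: Iterable[ReviewRecord]) -> float:
    """Share of reviewed outputs that the human overrode."""
    records = list(records)
    if not records:
        return 0.0
    return sum(r.overridden for r in records) / len(records)


def appeal_upheld_rate(records: Iterable[ReviewRecord]) -> Optional[float]:
    """Share of resolved appeals decided in the appellant's favor, or None if none resolved."""
    resolved = [r for r in records if r.appealed and r.appeal_upheld is not None]
    if not resolved:
        return None
    return sum(bool(r.appeal_upheld) for r in resolved) / len(resolved)


log = [
    ReviewRecord(overridden=True),
    ReviewRecord(overridden=False),
    ReviewRecord(overridden=False, appealed=True, appeal_upheld=True),
    ReviewRecord(overridden=False, appealed=True, appeal_upheld=False),
]
rate = override_rate(log)
upheld = appeal_upheld_rate(log)
```

Neither number has a universally correct value. An override rate near zero may indicate a well‑performing model or reviewers who are rubber‑stamping, so these metrics are best read alongside spot audits.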

D. Monitor, measure, and audit.

- Establish dashboards for drift, subgroup performance, and incident reporting. Use documented change control and independent review in high‑risk contexts.
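One concrete form of subgroup monitoring is to compare false positive rates across groups, the kind of disparity reported in the COMPAS case. The following is a minimal sketch with made‑up data; the function names and the choice of false positive rate as the metric are assumptions, and other fairness metrics may suit other use cases better.

```python
from collections import defaultdict
from typing import Dict, Sequence


def false_positive_rates(
    groups: Sequence[str], y_true: Sequence[int], y_pred: Sequence[int]
) -> Dict[str, float]:
    """False positive rate per group: predicted 1 among cases whose true label is 0."""
    if not (len(groups) == len(y_true) == len(y_pred)):
        raise ValueError("inputs must have the same length")
    negatives: Dict[str, int] = defaultdict(int)
    false_pos: Dict[str, int] = defaultdict(int)
    for group, truth, pred in zip(groups, y_true, y_pred):
        if truth == 0:
            negatives[group] += 1
            if pred == 1:
                false_pos[group] += 1
    # Groups with no true negatives are omitted because the rate is undefined.
    return {g: false_pos[g] / n for g, n in negatives.items()}


def max_gap(rates: Dict[str, float]) -> float:
    """Largest difference in rate between any two groups."""
    return max(rates.values()) - min(rates.values()) if rates else 0.0


# Illustrative data only.
groups = ["a", "a", "a", "a", "b", "b", "b", "b"]
y_true = [0, 0, 0, 1, 0, 0, 1, 1]
y_pred = [1, 0, 0, 1, 1, 1, 0, 1]

rates = false_positive_rates(groups, y_true, y_pred)
gap = max_gap(rates)
```

A dashboard could track `gap` over time and trigger human review when it crosses a threshold agreed in advance.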

E. Protect the person behind the data.

- Meet GDPR Article 22 requirements where applicable: disclose automated processing, enable human intervention, and maintain contestability.

F. Curate the data supply.

- Track synthetic data ratios; maintain “gold” real‑data baselines; document data lineage; apply human review to critical labeling and preference data (RLHF).
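Tracking the synthetic ratio and enforcing a floor of real data can be as simple as the check below. The `DatasetMix` class and the 0.5 floor are placeholders for illustration; the research cited above does not prescribe a specific threshold, so the right value is something to determine empirically.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class DatasetMix:
    """Counts of real and synthetic examples in a training set (hypothetical schema)."""
    real_examples: int
    synthetic_examples: int

    @property
    def total(self) -> int:
        return self.real_examples + self.synthetic_examples

    @property
    def synthetic_ratio(self) -> float:
        return self.synthetic_examples / self.total if self.total else 0.0


def accumulate(mix: DatasetMix, new_real: int, new_synthetic: int) -> DatasetMix:
    """Add new data alongside existing data instead of replacing it."""
    return DatasetMix(mix.real_examples + new_real, mix.synthetic_examples + new_synthetic)


def meets_real_data_floor(mix: DatasetMix, min_real_fraction: float = 0.5) -> bool:
    """True when real data makes up at least the assumed minimum share."""
    if mix.total == 0:
        return False
    return mix.real_examples / mix.total >= min_real_fraction


current = DatasetMix(real_examples=800, synthetic_examples=200)
proposed = accumulate(current, new_real=0, new_synthetic=1000)

current_ok = meets_real_data_floor(current)
proposed_ok = meets_real_data_floor(proposed)
```

Here the current mix passes, while adding 1,000 synthetic examples with no new real data drops the real share to 40% and fails the check, which is the point at which a human curator would be asked to sign off or source more real data.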

6) What the Data Suggests About Humans + AI vs. AI Alone

- Productivity: AI assistance helps, but humans remain essential for quality control. Gains cluster among less-experienced workers, increasing the need for supervision and coaching to prevent over‑reliance.

- Truthfulness and preference alignment: Human feedback can inadvertently encourage sycophancy (models telling users what they want to hear) unless feedback protocols reward correctness and evidence. Human steering must include calibrated incentives.

- Safety and integrity: The WHO and others urge external validation and strong governance before clinical or high‑stakes deployment; human experts remain the final guardrail.

7) Takeaways for Leaders

As AI systems become more deeply embedded in critical decisions across industries, it is increasingly clear that responsible progress depends on keeping humans at the center of control. Organizations that elevate human steering not only enhance safety and trust but also strengthen real-world performance by catching errors before automated outputs are acted on.

- Compliance is converging on human control. EU AI Act Article 14, GDPR Article 22, OECD Principles, and the NIST AI RMF all center on meaningful human oversight throughout the lifecycle.

- Human steering is a performance advantage. It converts raw automation into reliable outcomes, lifting productivity while curbing error propagation.

- Govern the data supply. Avoid synthetic‑only loops; maintain real‑data anchors and expert review to prevent model degradation.

- Design for accountability. Give trained humans the tools, time, and authority to challenge the machine, backed by documentation, transparency, and redress mechanisms.
